Why your AMS requirements document is setting you up to fail

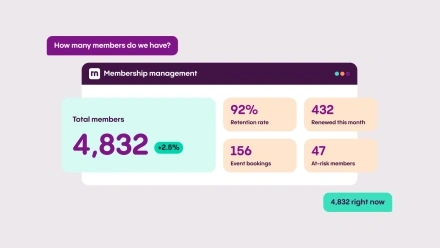

When Andrea Spencer, Director of Communications at the American Association of Professional Landmen (AAPL), began building the case for a new technology platform, the organisation was spending over $200,000 a year on six separate systems — and none of them worked reliably together. Reports required custom queries just to pull basic membership numbers. A correction in a course description had to be made in three different places. And whenever AAPL wanted to make even a small change to its core AMS, the price was prohibitive: one minor addition to the renewal process came with a $30,000 quote. After a decade of this, the team had largely given up asking for improvements and had simply adapted its processes to work around the system’s limitations instead.

That last part is the detail that matters most for any organisation about to begin an AMS selection. Because when the time came to write a requirements document, AAPL — like most associations in the same position — faced a structural problem: the people best placed to define what the new system needed to do were also the people most shaped by what the old one couldn’t. The requirements document that emerges from that starting point tends to describe the past rather than the future.

The backward-looking brief

When a team sits down to define what they need from a new system, the natural starting point is the system they’re already on — what does it do, what doesn’t it do, what workarounds have accumulated over the years, and which of those workarounds would they like to make permanent? The answers to those questions form the backbone of most requirements documents, which is precisely the problem.

The requirements reflect what the organisation has needed in the past, filtered through what the current system was able to deliver — and, crucially, constrained by what the current system couldn’t. Features the old platform never supported often don’t make it onto the list at all, because after years of working without them, nobody thinks to ask. Processes that have become deeply embedded, even where they’re genuinely inefficient, tend to be specified as requirements rather than identified as problems that a better system could eliminate entirely.

A renewal workflow that requires six manual steps may have started as a workaround for a CRM that couldn’t automate payment collection. If that workaround is now documented as a requirement, the selection process will favour the platform that can replicate it — rather than the one that would make it unnecessary.

The question worth asking of every line

The test that most requirements documents skip is a simple one: does this requirement exist because of a genuine member need, or because of how our current system works?

Applying that question rigorously to every line can be uncomfortable, because it requires being willing to acknowledge that certain processes exist not because they’re the right way to do things, but because the old system forced them into existence. There’s a meaningful difference between a genuine operational need and an inherited constraint, and being honest about which is which means being equally honest about where the organisation is going, not just where it has been.

AAPL’s approach to its evaluation was explicit about this from the start. Andrea Spencer explained:

“We came in saying we’re not married to our processes. If we can make things better, that’s great. And there were a lot of places where [the ReadyMembership team] said, what if you did it this way? And it was like, yes, let’s do that.”

That openness to challenging processes, rather than simply specifying them, changed what the implementation was able to deliver. AAPL consolidated six platforms into one, eliminated over $200,000 in annual technology costs, and transformed its certification program from a paper-heavy, weeks-long ordeal into a streamlined digital workflow that now takes days. Renewals that once consumed the time of three staff members now require only one.

What a better starting point looks like

The alternative to starting with the current system is to start with the member. What are your members trying to do, and what does the platform need to enable for them to do it well? Where do they drop off, give up, or call the office to complete something they should be able to do themselves? What would a new joiner’s first ninety days look like if the platform worked the way it should?

Those questions tend to produce a different kind of requirements document — one that describes outcomes rather than features, and that is explicit about the processes worth keeping versus the ones worth redesigning from scratch.

It also helps to involve more than the team closest to the current system. Membership staff who handle renewals every day will naturally specify the renewal workflow as it exists. But the colleagues fielding calls from members who can’t renew online, or the finance team who manually reconcile payment reports each month, may have a very different view of what genuinely needs to change.

Over-specification has its own costs

There is a related failure mode in the opposite direction. A long list of highly granular requirements, many of them reflecting edge cases or processes that could simply be redesigned, makes it harder to find the right platform. Vendors optimise their responses to match the brief, which means the evaluation ends up comparing how well each platform addresses the specific things you’ve asked about rather than how well it would serve your members and team in practice.

The most productive evaluation conversations tend to happen when the brief is clear about the problem rather than prescriptive about the solution: what the organisation is trying to achieve with renewals, what a good member events experience actually looks like, what the team would do with real-time reporting if it were available. Those questions invite vendors to show how the platform works, rather than simply confirm that it can do X. As Amber Shaverdi Huston, Executive Director of NACA, put it: “Good service shines when you figure out problems together in partnership and not by just following standard operating procedures.” That kind of conversation is only possible when the brief leaves enough room for it.

Starting in the right place

None of this is an argument against structured evaluation. Comparing platforms on specific capabilities matters, particularly for organisations with complex integration, financial management, or data migration requirements. But those comparisons are most useful once the harder, earlier question has been answered: what is this membership trying to do, and what does the platform need to make possible?

A requirements document that describes problems to solve rather than processes to replicate creates the conditions for a genuinely useful procurement. And that, more than any individual feature comparison, is what tends to determine whether an organisation is still satisfied with its platform three years after going live.

If you’re beginning an AMS selection and want to work through the right questions before writing the brief, talk to our team.